WHY

Why an Operating System for Data?

To understand the need for an Operating System for Data, we first need to briefly revisit its roots.

What is an Operating System?

An Operating System abstracts the procedural complexities of applications away from users and declaratively serves the outcomes.

The need for a Data Operating System

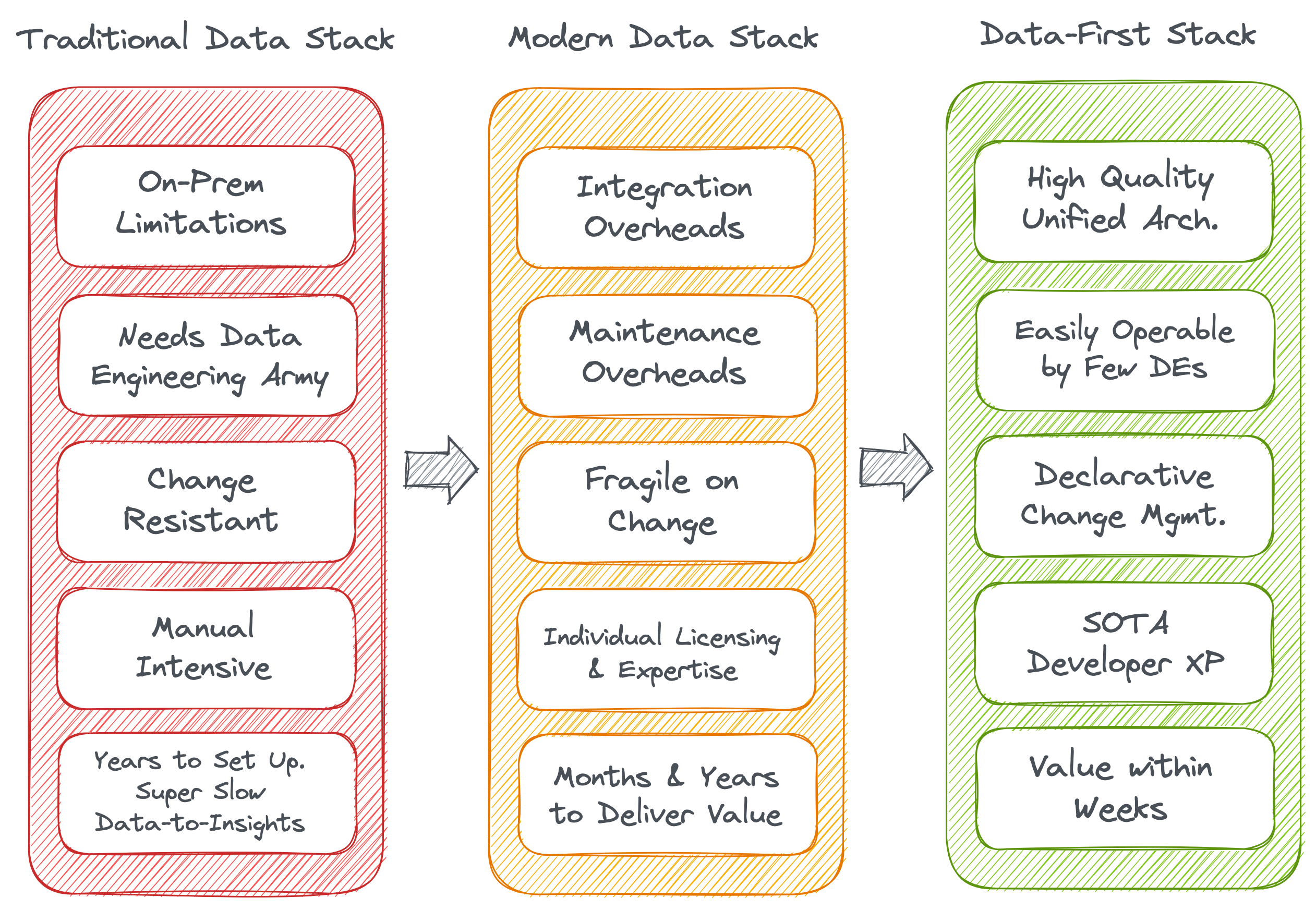

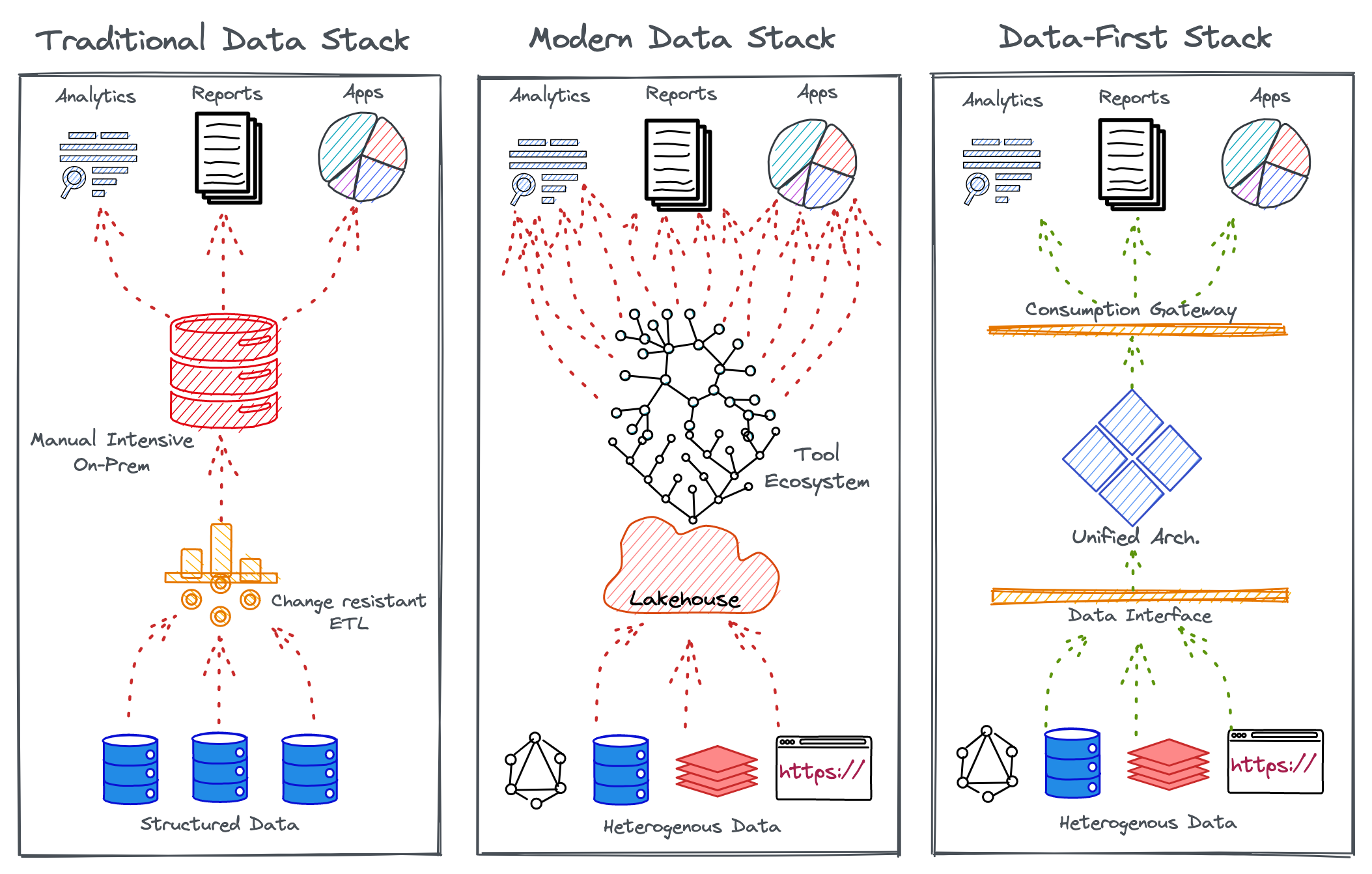

Traditional Data Stack → Modern Data Stack → Data-First Operating System

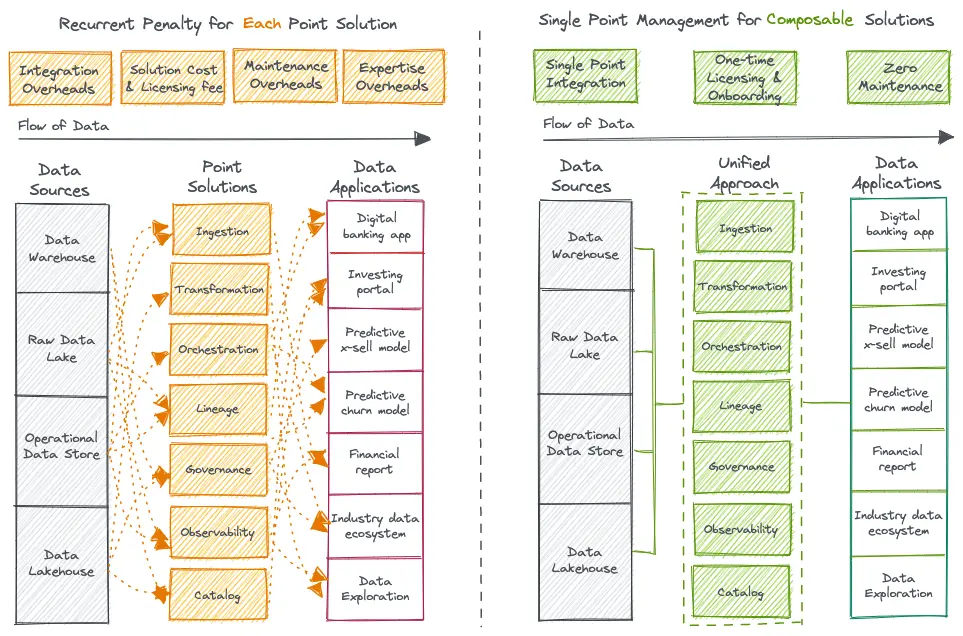

Overheads of Point Solutions

The Modern Data Stack (MDS) is littered with point solutions that carry integration, resource, and maintenance overheads, adding up to millions in investment. The MDS routinely breaks Agile and DataOps principles: instead of solving problems, it creates siloed data, patchy insights, and security risks.

Adversities of Data Personas

Governance & Data Quality as an Afterthought

Data governance has been a hot topic for quite some time, but successful implementations remain rare. Adhering to the organization's data compliance standards is a constant struggle. A lack of governance directly impacts the quality and experience of the data that passes through serpentine pipelines riddled with miscellaneous, ad-hoc integration points.

Inferior Developer Experience

WHAT

What is a Data Operating System?

The Data Operating System is a unified architecture design that enables the Data-First Stack to abstract complex, distributed subsystems and deliver a consistent, outcome-first experience to non-expert end users.

Mindset Transformation precedes Architecture Transformation.

Back to Basics (Primitives)

Primitives of a Data Operating System

Workflow

A Workflow is a manifestation of a Directed Acyclic Graph (DAG) that helps you streamline and automate the process of working with big data. Every data ingestion or processing task in a Data Operating System, whether it handles batch or streaming data, is defined and executed through Workflows.
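To make the DAG idea concrete, here is a minimal sketch that runs tasks in dependency order using Python's standard `graphlib.TopologicalSorter` (Python 3.9+). The task names and the shared `ctx` dictionary are hypothetical and only illustrate ordering; this is not how any particular Data Operating System defines or executes Workflows, and it covers a batch run only.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical tasks; each reads from and writes to a shared context dict.
def ingest(ctx):
    ctx["raw"] = [" 3", "1 ", "2"]

def clean(ctx):
    ctx["clean"] = [int(x.strip()) for x in ctx["raw"]]

def aggregate(ctx):
    ctx["total"] = sum(ctx["clean"])

def publish(ctx):
    ctx["published"] = f"total={ctx['total']}"

# Each task maps to the set of tasks it depends on (its predecessors).
dag = {
    "clean": {"ingest"},
    "aggregate": {"clean"},
    "publish": {"aggregate"},
}
tasks = {"ingest": ingest, "clean": clean, "aggregate": aggregate, "publish": publish}

def run_workflow(dag, tasks):
    """Execute tasks in a dependency-respecting (topological) order.

    static_order() raises graphlib.CycleError if the graph is not acyclic."""
    ctx, order = {}, []
    for name in TopologicalSorter(dag).static_order():
        tasks[name](ctx)
        order.append(name)
    return ctx, order

ctx, order = run_workflow(dag, tasks)
print(order)             # ['ingest', 'clean', 'aggregate', 'publish']
print(ctx["published"])  # total=6
```

Because the graph is declared rather than scripted step by step, adding a task only means adding a node and its dependencies; the scheduler works out the order.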

Service

A Service is a long-running process that receives and/or serves an API. It is meant for scenarios that need a continuous flow of real-time data, such as event processing or real-time stock trades. A Service makes it easy to gather, process, and scrutinize streaming data so you can react quickly.
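The sketch below models the stock-trade example as a long-running consumer that emits a per-symbol moving average after every event. It is a minimal illustration under assumptions: any Python iterable stands in for the unbounded stream, and `moving_average_service` is a hypothetical name rather than part of a Data Operating System API.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple

def moving_average_service(
    events: Iterable[Tuple[str, float]], window: int = 3
) -> Iterator[Tuple[str, float]]:
    """Consume a stream of (symbol, price) events and emit the per-symbol
    moving average over the last `window` prices after every event.

    A real Service would run indefinitely against an unbounded source;
    here any iterable stands in for that stream."""
    windows = {}  # symbol -> deque holding at most `window` recent prices
    for symbol, price in events:
        buf = windows.setdefault(symbol, deque(maxlen=window))
        buf.append(price)  # oldest price drops out automatically once full
        yield symbol, sum(buf) / len(buf)

trades = [("ACME", 10.0), ("ACME", 12.0), ("ACME", 14.0), ("ACME", 16.0)]
for symbol, avg in moving_average_service(trades):
    print(symbol, avg)
```

Writing the Service as a generator keeps state (the per-symbol windows) inside one long-lived process and reacts to each event as it arrives, instead of recomputing over a stored batch.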

Policy

Policies govern the behavior of people, applications, and services. Two kinds of policies prevail in a Data Operating System. An access policy is a security measure that regulates what individuals can access. A data policy describes the rules controlling the integrity, security, quality, and use of data throughout its lifecycle and state changes.

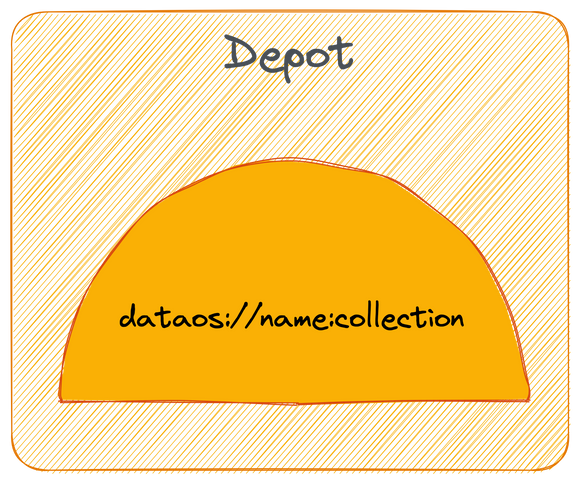
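As an illustration of the second kind, a data policy, here is a minimal sketch that masks columns tagged as PII unless the consumer carries an allowed tag. The policy structure, tag names, and column names are hypothetical, and SHA-256 prefix masking is just one simple technique chosen for the example; real deployments may use salted hashing or tokenization instead.

```python
import hashlib

# Hypothetical data policy: PII columns are masked unless the consumer
# carries one of the unmasking tags. All names here are illustrative.
DATA_POLICY = {
    "pii_columns": {"email", "phone"},
    "unmasked_for_tags": {"roles:pii-reader"},
}

def mask(value: str) -> str:
    # One-way SHA-256 digest prefix; illustrative only (not salted).
    return hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]

def apply_data_policy(rows, consumer_tags, policy=DATA_POLICY):
    """Return copies of `rows` with PII columns masked for untagged consumers."""
    if set(consumer_tags) & policy["unmasked_for_tags"]:
        return [dict(r) for r in rows]
    return [
        {k: (mask(v) if k in policy["pii_columns"] else v) for k, v in r.items()}
        for r in rows
    ]

rows = [{"id": 1, "email": "a@example.com"}]
print(apply_data_policy(rows, {"roles:analyst"}))     # email replaced by a 12-char digest
print(apply_data_policy(rows, {"roles:pii-reader"}))  # email left as-is
```

The policy lives in one declarative structure, separate from the code that reads the data, so the rule can change without touching every consumer.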

Depot

Depots provide you with a uniform way to connect with a variety of data sources. Depots abstract away the different protocols and complexities of the source systems to present a common taxonomy and route to address these source systems.

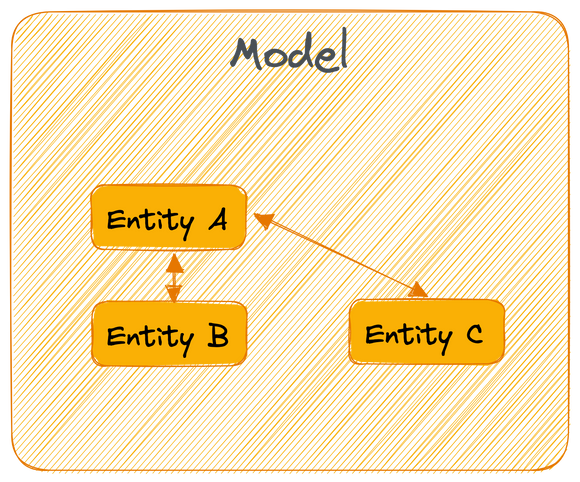
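To show what "a common taxonomy and route to address these source systems" can look like, here is a minimal sketch: one address format, parsed once, routed to a source-specific connector. The `depot://<depot>/<collection>/<dataset>` scheme, the depot names, and the connector classes are all hypothetical; the connectors only describe where data lives rather than opening real connections.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

@dataclass(frozen=True)
class Address:
    depot: str
    collection: str
    dataset: str

def parse_address(uri: str) -> Address:
    """Parse a hypothetical depot://<depot>/<collection>/<dataset> address."""
    parsed = urlparse(uri)
    if parsed.scheme != "depot":
        raise ValueError(f"unsupported scheme: {parsed.scheme!r}")
    parts = parsed.path.strip("/").split("/")
    if not parsed.netloc or len(parts) != 2:
        raise ValueError(f"expected depot://<depot>/<collection>/<dataset>, got {uri!r}")
    return Address(parsed.netloc, parts[0], parts[1])

# Hypothetical connectors: each hides one source system's protocol details.
class PostgresConnector:
    def locate(self, addr: Address) -> str:
        return f"postgresql table {addr.collection}.{addr.dataset}"

class S3Connector:
    def locate(self, addr: Address) -> str:
        return f"s3 prefix {addr.collection}/{addr.dataset}/"

# Registry: depot name -> connector for that source.
DEPOTS = {"sales_pg": PostgresConnector(), "lake_s3": S3Connector()}

def resolve(uri: str) -> str:
    addr = parse_address(uri)
    return DEPOTS[addr.depot].locate(addr)

print(resolve("depot://sales_pg/public/orders"))  # postgresql table public.orders
print(resolve("depot://lake_s3/raw/events"))      # s3 prefix raw/events/
```

Consumers only ever see the uniform address; swapping a source system means registering a different connector under the same depot name.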

Model

Models are semantic data model constructs devised by business teams to power specific use cases. Models enable the injection of rich business context into the data model and act as an ontological/taxonomical layer above contracts and physical data to expose a common business glossary to the end consumer.

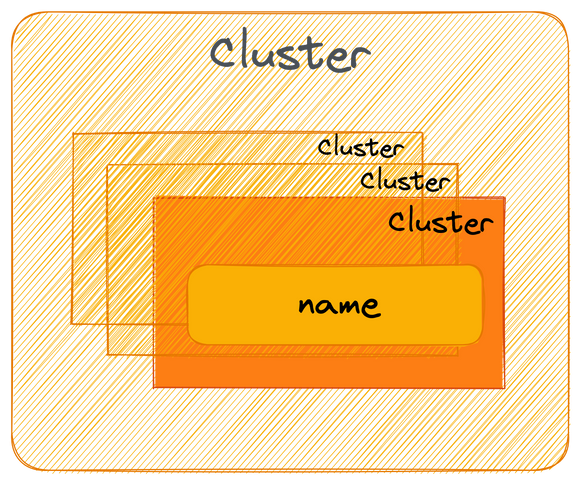
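A minimal sketch of a semantic layer: business-friendly terms are mapped onto physical columns, and a query phrased in business language is translated into SQL. The model layout, table name, and column names are hypothetical and show the idea only; this is not the modeling language of any particular Data Operating System.

```python
# Hypothetical semantic model: business terms mapped onto physical columns.
MODEL = {
    "entity": "Customer",
    "table": "crm.cust_master",
    "dimensions": {"Customer Name": "cst_nm", "Country": "cntry_cd"},
    "measures": {"Lifetime Value": "SUM(ord_amt)"},
}

def to_sql(model, dimensions, measures):
    """Translate business terms into SQL against the physical table.

    Unknown terms raise KeyError, so only terms defined in the model can be
    queried (which also keeps arbitrary text out of the generated SQL)."""
    dims = [f'{model["dimensions"][d]} AS "{d}"' for d in dimensions]
    meas = [f'{model["measures"][m]} AS "{m}"' for m in measures]
    sql = f"SELECT {', '.join(dims + meas)} FROM {model['table']}"
    if dimensions and measures:
        sql += " GROUP BY " + ", ".join(model["dimensions"][d] for d in dimensions)
    return sql

print(to_sql(MODEL, ["Country"], ["Lifetime Value"]))
```

The consumer asks for "Lifetime Value by Country" and never needs to know that the physical columns are called `cntry_cd` and `ord_amt`; that mapping is the business glossary the model exposes.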

Cluster

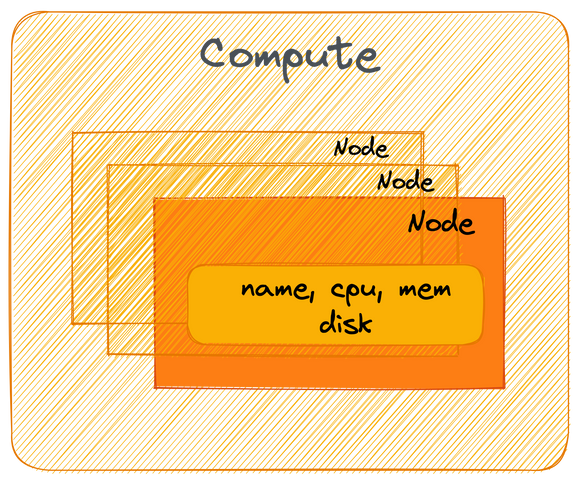

A cluster is a collection of computation resources and configurations on which you run data engineering, data science, and analytics workloads. A Cluster in a Data Operating System is provisioned for exploratory, querying, and ad-hoc analytics workloads.

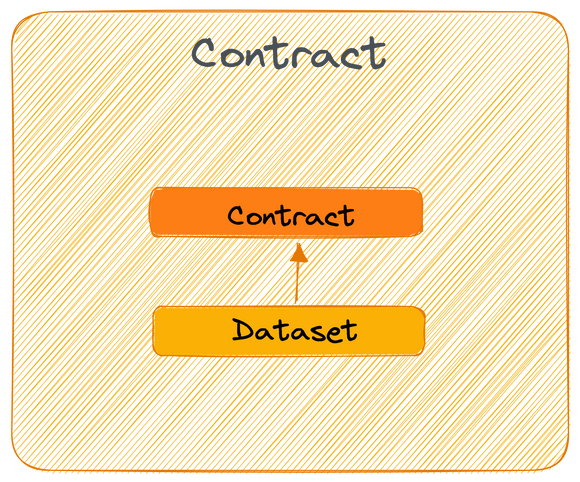

Contract

Contracts are expectations on data in the form of shape, business meaning, data quality, or security. They are guidelines or agreements between data producers and consumers to declaratively ensure the fulfillment of expectations. Contracts can be enabled by any primitive in the Data Operating System.
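A minimal sketch of a contract covering two of the expectations named above, shape and data quality, checked declaratively against incoming rows. The `Contract` class and the `orders_contract` example are hypothetical and run in isolation here; in a Data Operating System a contract would be attached to primitives rather than called by hand.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

@dataclass
class Contract:
    """Hypothetical contract: expected shape plus per-column quality rules."""
    schema: Dict[str, type]                                    # column -> expected type
    checks: Dict[str, Callable[[Any], bool]] = field(default_factory=dict)

    def validate(self, rows: List[Dict[str, Any]]) -> List[str]:
        """Return human-readable violations; an empty list means the data conforms."""
        violations = []
        for i, row in enumerate(rows):
            for col, expected_type in self.schema.items():
                if col not in row:
                    violations.append(f"row {i}: missing column {col!r}")
                elif not isinstance(row[col], expected_type):
                    violations.append(f"row {i}: {col!r} is not {expected_type.__name__}")
                elif col in self.checks and not self.checks[col](row[col]):
                    violations.append(f"row {i}: {col!r} failed its quality check")
        return violations

orders_contract = Contract(
    schema={"order_id": int, "amount": float},
    checks={"amount": lambda v: v >= 0},
)
rows = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": "2", "amount": -1.0},
    {"order_id": 3},
]
for violation in orders_contract.validate(rows):
    print(violation)
```

Quality checks run only after the type check passes, so a rule such as `v >= 0` is never applied to a value of the wrong type.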

Secret

Secrets allow you to store sensitive information such as passwords, tokens, or keys. Users reference a Secret by its name instead of using the sensitive information directly. Decoupling Secrets from other primitives makes it possible to monitor and control access while minimizing the risk of data leaks.

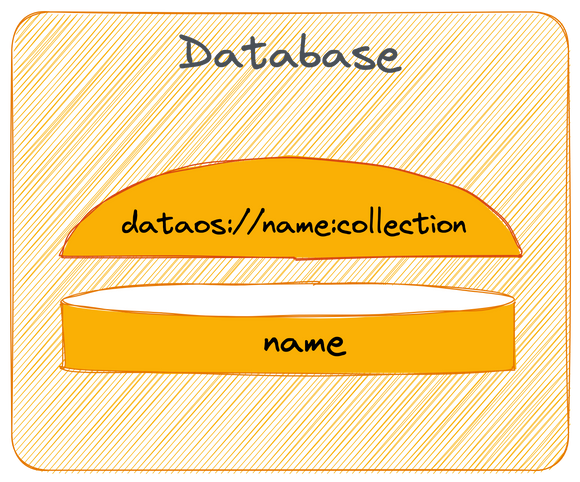
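The name-based decoupling can be sketched as follows: a configuration stores only the secret's name, and a store resolves the value at the moment of use, checking a grant and recording every attempt. `SecretStore`, the grant layout, and the requester names are hypothetical, and the stored value is a placeholder rather than a real credential.

```python
from typing import Dict, List, Set, Tuple

class SecretStore:
    """Hypothetical store: primitives keep only secret *names*; values are
    resolved at the moment of use, and every lookup attempt is audited."""

    def __init__(self, values: Dict[str, str], grants: Dict[str, Set[str]]):
        self._values = values    # secret name -> sensitive value
        self._grants = grants    # secret name -> requesters allowed to read it
        self.audit_log: List[Tuple[str, str, bool]] = []

    def resolve(self, name: str, requester: str) -> str:
        allowed = requester in self._grants.get(name, set())
        self.audit_log.append((requester, name, allowed))  # record every attempt
        if not allowed:
            raise PermissionError(f"{requester!r} may not read secret {name!r}")
        return self._values[name]

# A depot definition refers to the secret by name, never by value.
depot_config = {"type": "postgres", "host": "db.internal", "password_secret": "pg-readonly"}

store = SecretStore(
    values={"pg-readonly": "example-only-value"},  # placeholder, not a real credential
    grants={"pg-readonly": {"depot:sales_pg"}},
)
password = store.resolve(depot_config["password_secret"], requester="depot:sales_pg")
```

Because the configuration never contains the value, it can be versioned and shared freely, while the audit log shows who requested which secret and whether the request was granted.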

Database

The Database primitive is for use cases where output must be saved in a specific format in a relational database. It can be used in any scenario where you need to syndicate structured data. Once you create a Database, you can put a Depot and a Service on top of it to serve the data instantly.
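As a rough illustration of the pattern (persist structured output in a fixed relational schema, then serve it through a thin read path), here is a minimal sketch using Python's built-in `sqlite3` with an in-memory database standing in for the relational store. The table, functions, and values are hypothetical; a real setup would expose the read path through a Depot and a Service rather than a local function.

```python
import sqlite3

# In-memory SQLite stands in for the relational store (assumption for illustration).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (day TEXT PRIMARY KEY, revenue REAL NOT NULL)")

def save_output(rows):
    """Persist a workflow's structured output in a fixed schema (upsert by day)."""
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT OR REPLACE INTO daily_sales (day, revenue) VALUES (?, ?)", rows
        )

def serve_revenue(day):
    """What a thin read-only service endpoint might call per request."""
    row = conn.execute(
        "SELECT revenue FROM daily_sales WHERE day = ?", (day,)
    ).fetchone()
    return None if row is None else row[0]

save_output([("2024-01-01", 120.5), ("2024-01-02", 98.0)])  # illustrative values
print(serve_revenue("2024-01-02"))  # 98.0
```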

Compute

Compute resources can be thought of as the processing power required by any data processing workflow/service or query workload to carry out tasks. Compute is related to common server components, such as CPUs and RAM. So a physical server within a cluster would be considered a compute resource, as it may have multiple CPUs and gigabytes of RAM for processing workloads.

Defining principles of a Data Operating System

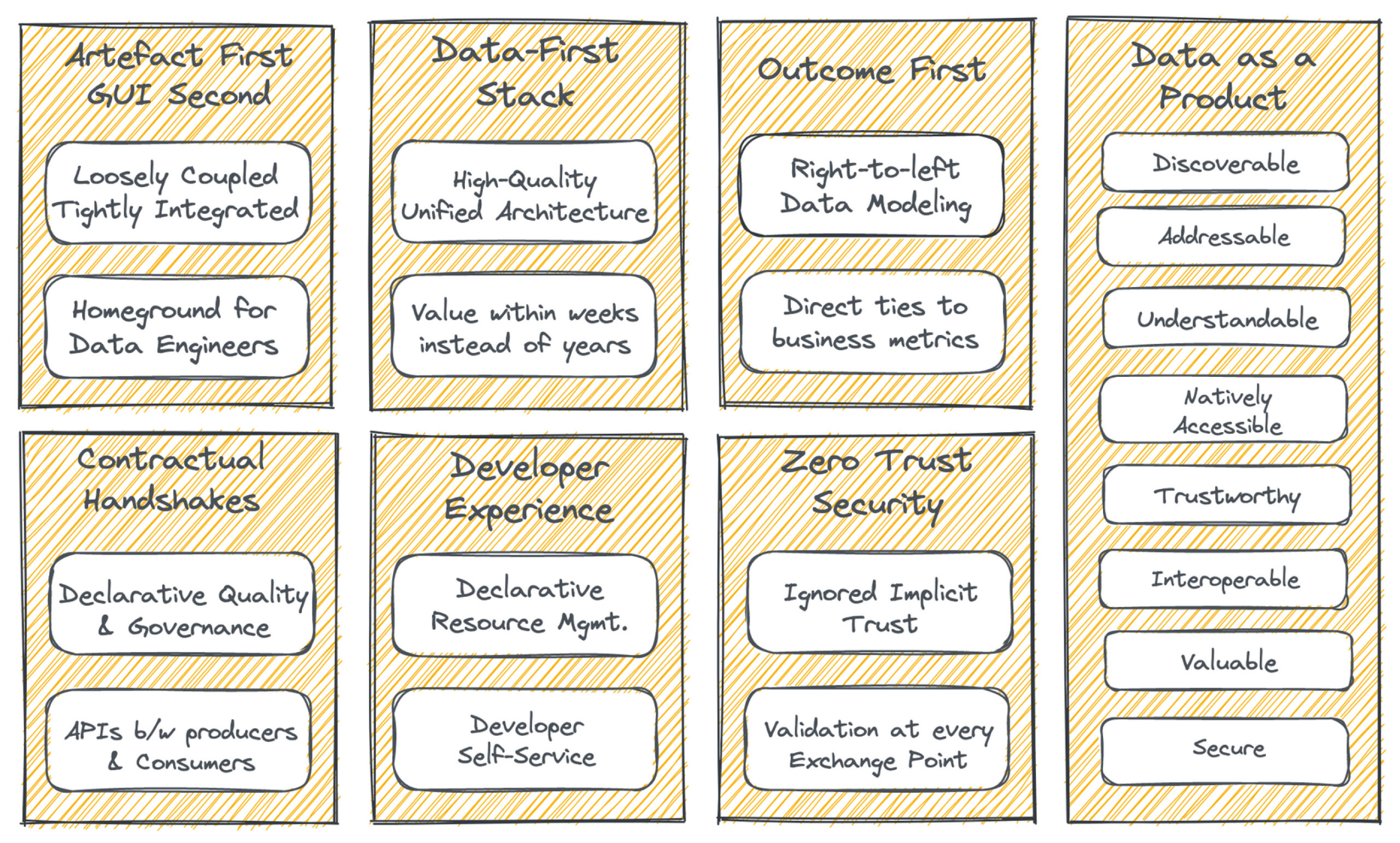

Data as a Product

The most important objective of a Data Operating System is to consistently deliver Data Products. A Data Product is a unit of data that adds value to the end user. A Data Product must be Discoverable, Addressable, Understandable, Natively accessible, Trustworthy, Interoperable-and-composable, Valuable on its own, and Secure. A Data Product is materialized after raw ingested data is passed through the three-tiered Data Operating System architecture.

Artefact first, GUI second

The Data Operating System Architecture is made for Data Developers. With a suite of loosely coupled and tightly integrable primitives, it gives data engineers complete flexibility in how the OS is operationalized. Being Artefact-first with open standards, it can be used as an architectural layer on top of any existing data infrastructure. GUIs are deployed so that business users can consume data directly.

Developer Experience

The Data Operating System is a programmable data platform that enables a state-of-the-art experience for data developers. It is a complete self-service interface where developers can declaratively manage resources through APIs, CLIs, and even GUIs if it strikes their fancy. The curated self-service layers built from OS primitives provide a dedicated platform that increases developer productivity.

Contractual Handshakes

Contracts in data development are analogous to APIs in software development. A Data Operating System's primitives directly implement Open Data Contracts, which are expectations on data: agreements between producers and consumers that declaratively ensure the fulfillment of data shape, quality, and security expectations.

Outcome First

A Data Operating System mercilessly trims unnecessary sidecars to ensure results come first. Every primitive is directly tied to the business purpose through a semantic layer. This establishes a direct positive correlation with revenue, since data is selectively managed only for use cases with direct business applications.

Zero trust security

A Data Operating System is suspicious of every event within its ecosystem. It does not grant implicit trust; instead, it continuously validates security policies at every exchange point through purpose-driven ABAC (attribute-based access control) policies. Security engines are segregated into central and distributed pockets.
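Purpose-driven ABAC with a default-deny stance can be sketched in a few lines: a request is allowed only if some rule matches the caller's attributes, the action, the resource's attributes, and the declared purpose, and the check runs on every call. The `Request`/`Rule` shapes, tag names, and `guarded_read` helper are hypothetical and are not the policy language of any specific product.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable

@dataclass(frozen=True)
class Request:
    subject_tags: FrozenSet[str]   # attributes of the caller
    action: str                    # e.g. "read"
    resource_tags: FrozenSet[str]  # attributes of the data being touched
    purpose: str                   # declared reason for access

@dataclass(frozen=True)
class Rule:
    subject_tag: str
    action: str
    resource_tag: str
    purposes: FrozenSet[str]

def is_allowed(req: Request, rules: Iterable[Rule]) -> bool:
    """Default deny: grant only if some rule matches every attribute,
    including the declared purpose. Nothing is trusted implicitly."""
    return any(
        rule.subject_tag in req.subject_tags
        and rule.action == req.action
        and rule.resource_tag in req.resource_tags
        and req.purpose in rule.purposes
        for rule in rules
    )

RULES = [Rule("team:marketing", "read", "dataset:customers", frozenset({"campaign-analysis"}))]

def guarded_read(req: Request, fetch):
    # Re-evaluated on every call at this exchange point; no cached session trust.
    if not is_allowed(req, RULES):
        raise PermissionError(f"denied: {req.action} for purpose {req.purpose!r}")
    return fetch()
```

The same caller reading the same dataset is allowed for `campaign-analysis` but denied for any purpose no rule lists, which is what makes the policy purpose-driven rather than identity-only.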

Data-First Stack

The Data-First Stack lives up to its name: it puts data first by abstracting away the nuances of low-level data management, which otherwise consume most of a data developer's active hours. As a result, a Data Operating System's data applications optimize resource usage and ROI.

Conceptual Architecture of a Data Operating System

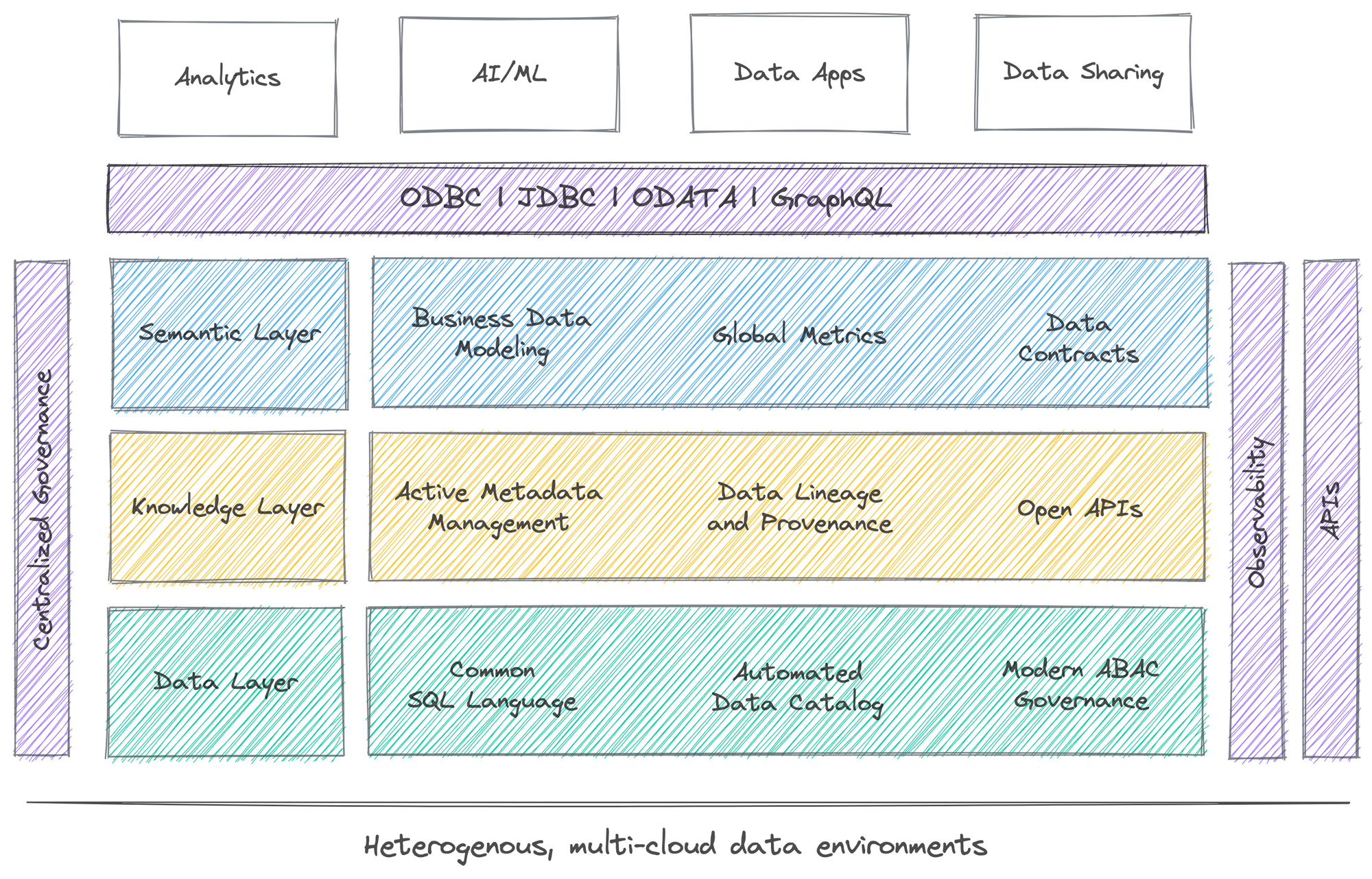

The Three-Tier Logical Infrastructure

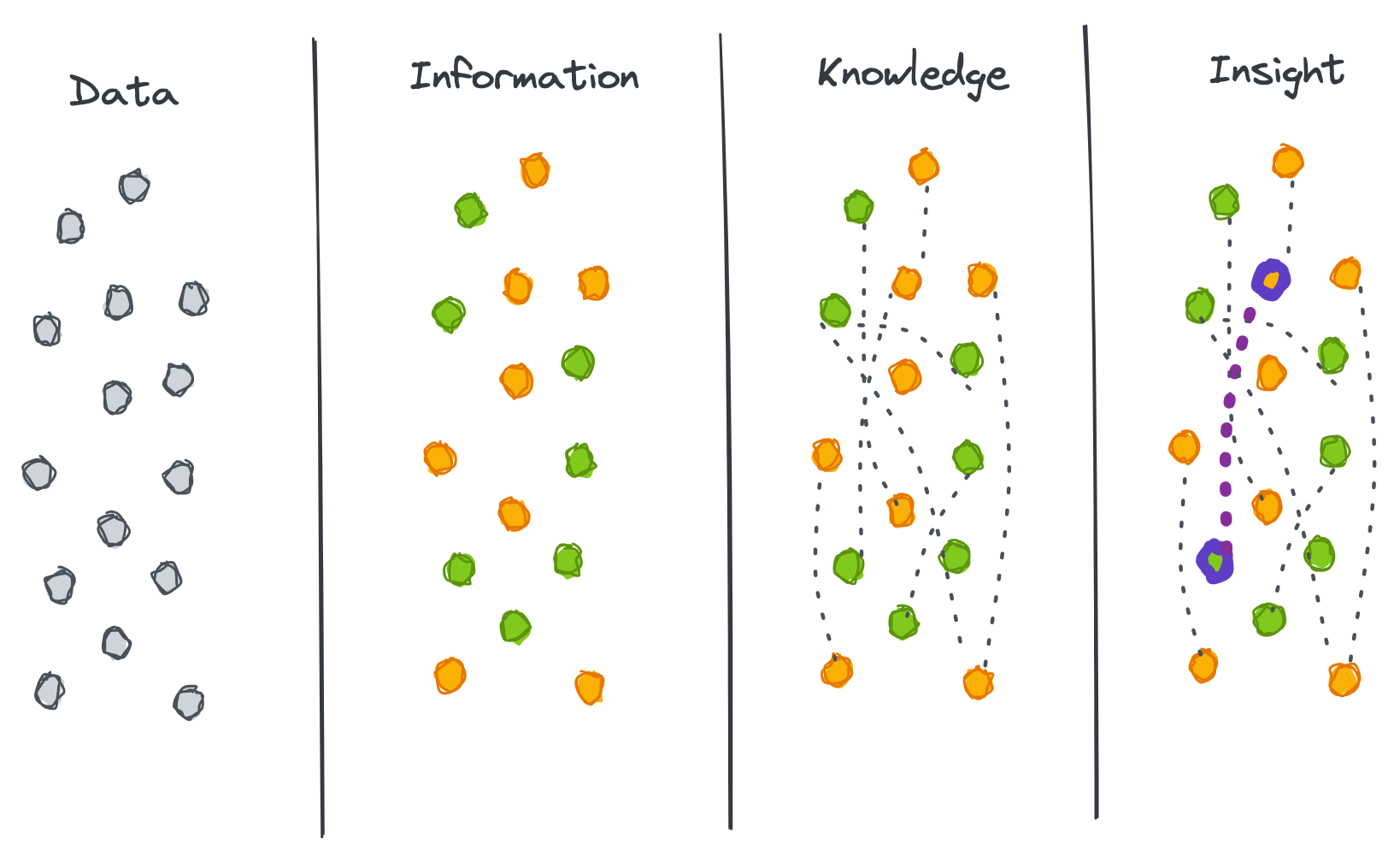

A Data Operating System is conceptually segregated into three logical layers that talk to each other to establish a complete 360-degree view of data while keeping physical data and logical constructs safely decoupled. Changes or updates in one layer can be selectively prevented from corrupting the others. The first layer consumes and maintains physical data; the second creates knowledge by detecting active and valuable interrelations within data and metadata; and the third establishes a direct tie with business purposes to create insights and wisdom.

Semantic Layer

Knowledge Layer

Data Layer

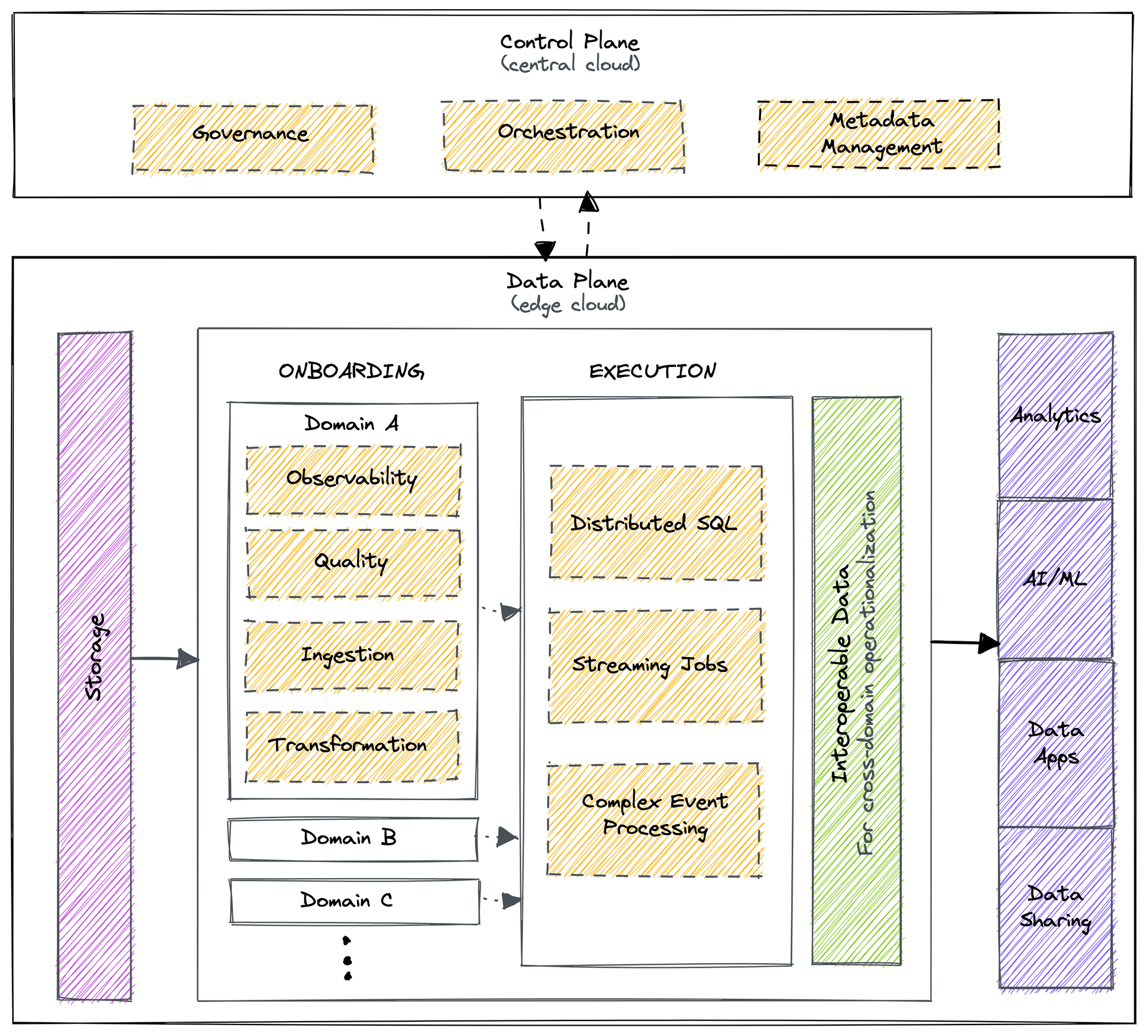

The Central Control Plane & Distributed Data Plane

Control Plane

The Control Plane helps admins govern:

- Policy-based and purpose-driven access control of various touchpoints in cloud-native environments.

- The orchestration of data workloads, compute cluster life-cycle management, and version control of a Data Operating System's resources.

- Metadata of different types of data assets.

Data Plane

The Data Plane helps data developers deploy, manage, and scale data products through:

- A distributed SQL query engine that works with data federated across different source systems.

- A declarative stack to ingest, process, and extensively syndicate data.

- A complex event processing engine for stateful computations over data streams.

- A declarative DevOps SDK to publish data apps in production.

HOW

How does a Data Operating System Manifest?

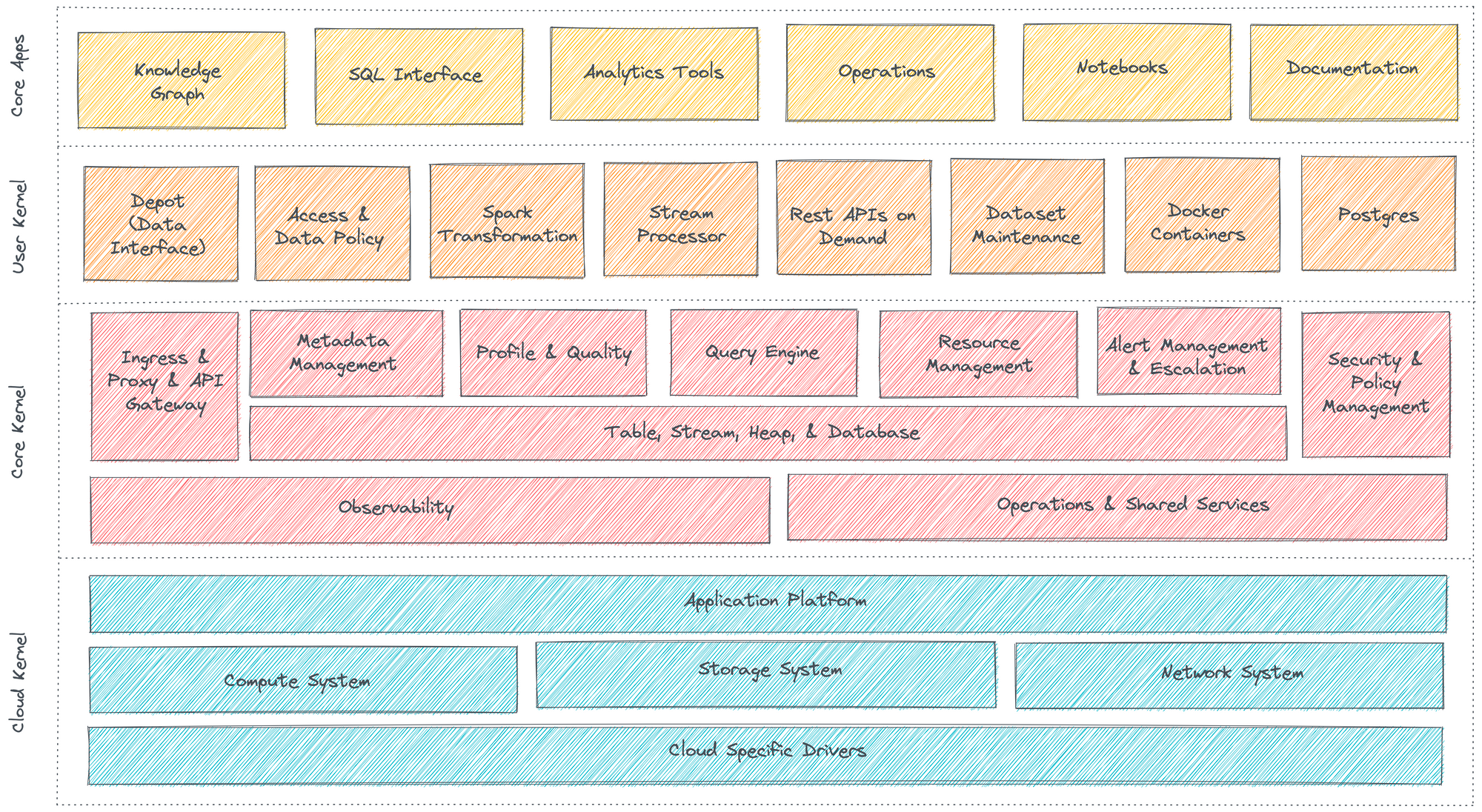

Cloud Kernel

Core Kernel

Core Kernel provides another degree of abstraction by further translating the APIs of the cloud kernel into higher-order functions. From a user’s perspective, the core kernel is where the activities like resource allocation, orchestration of primitives, scheduling, and database management occur. The cluster and compute that you need to carry out processes are declared here; the core kernel then communicates with the cloud kernel on your behalf to provision the requisite VMs or node pools and pods.
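The translation step, from a declared cluster to a concrete node-pool request, can be sketched as a small planning function. Everything here is hypothetical: the `ClusterSpec` and `NodePoolRequest` fields, the default machine size, and the simple packing rule (workers per machine limited by whichever of CPU or memory runs out first, ignoring system overhead). It illustrates the kind of higher-order translation described above, not the actual behavior of a core kernel.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class ClusterSpec:
    """What a user declares (hypothetical fields)."""
    name: str
    workers: int
    cpu_per_worker: float          # vCPUs per worker
    memory_gib_per_worker: float   # GiB per worker

@dataclass(frozen=True)
class NodePoolRequest:
    """What the core kernel might ask the cloud kernel for (hypothetical)."""
    pool: str
    nodes: int
    machine_vcpus: int
    machine_memory_gib: int

def plan_node_pool(spec: ClusterSpec, machine_vcpus: int = 8,
                   machine_memory_gib: int = 32) -> NodePoolRequest:
    if spec.cpu_per_worker <= 0 or spec.memory_gib_per_worker <= 0:
        raise ValueError("per-worker resources must be positive")
    if spec.cpu_per_worker > machine_vcpus or spec.memory_gib_per_worker > machine_memory_gib:
        raise ValueError("a single worker does not fit on one machine")
    # Workers per machine is limited by whichever resource runs out first.
    per_node = int(min(machine_vcpus // spec.cpu_per_worker,
                       machine_memory_gib // spec.memory_gib_per_worker))
    return NodePoolRequest(
        pool=f"{spec.name}-pool",
        nodes=math.ceil(spec.workers / per_node),
        machine_vcpus=machine_vcpus,
        machine_memory_gib=machine_memory_gib,
    )

request = plan_node_pool(ClusterSpec("analytics", workers=5, cpu_per_worker=2, memory_gib_per_worker=8))
print(request.nodes)  # 2
```

The user states only what the workload needs; the machine counts and sizes that the cloud kernel must provision are derived, which is the abstraction the core kernel is described as providing.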

User Kernel

COMING UP

Watch this space for more on the art of the possible with a Data Operating System:

- How does a Data Operating System enable design architectures such as Data Mesh and Data Fabric?

- How does a Data Operating System enable direct business value through constructs such as Customer 360?

- Could a Data Operating System’s primitives be used selectively?

- How does a Data Operating System work with existing Data Infrastructures?

- How does a Data Operating System enable value for organizations that have already instilled multiple point solutions?

- Are there any existing implementations of a Data Operating System?

- Is a Data Operating System relevant for on-premises data ecosystems?